Link-checking with generative AI

I made a mistake this week:

- My CloudFront distribution and Bear started interacting in a way that created an infinite loop that kept natemeyvis.com from rendering.1

- I solved this by just removing the CloudFront distribution, because Bear does caching and other useful things without needing CloudFront.

- But this broke links on my home page that pointed to `...my-page.html` and were getting the `.html` stripped off at the edge by CloudFront.

Total rookie move! I'd benefit from thinking through its causes carefully (it's not easy to write on the Internet for a decade without changing URL conventions or breaking links), but this is not that post. This is about building a tool to prevent future instances of this mistake.

First, at the risk of repeating myself, here are some striking facts about taking on this project:

- Rather than figure out if there's an external tool that suits me here, I went right to making my own tool...

- ...largely because of two contradictory-seeming facts: I only need a very simple set of behaviors now, but might want strange-shaped sets of behaviors later.

- And, of course, because I'm doing a sustained bootstrapping project.

- It's getting to the point where making my own tool is not much slower than signing up for and configuring a third-party tool (though there's plenty of variance here).

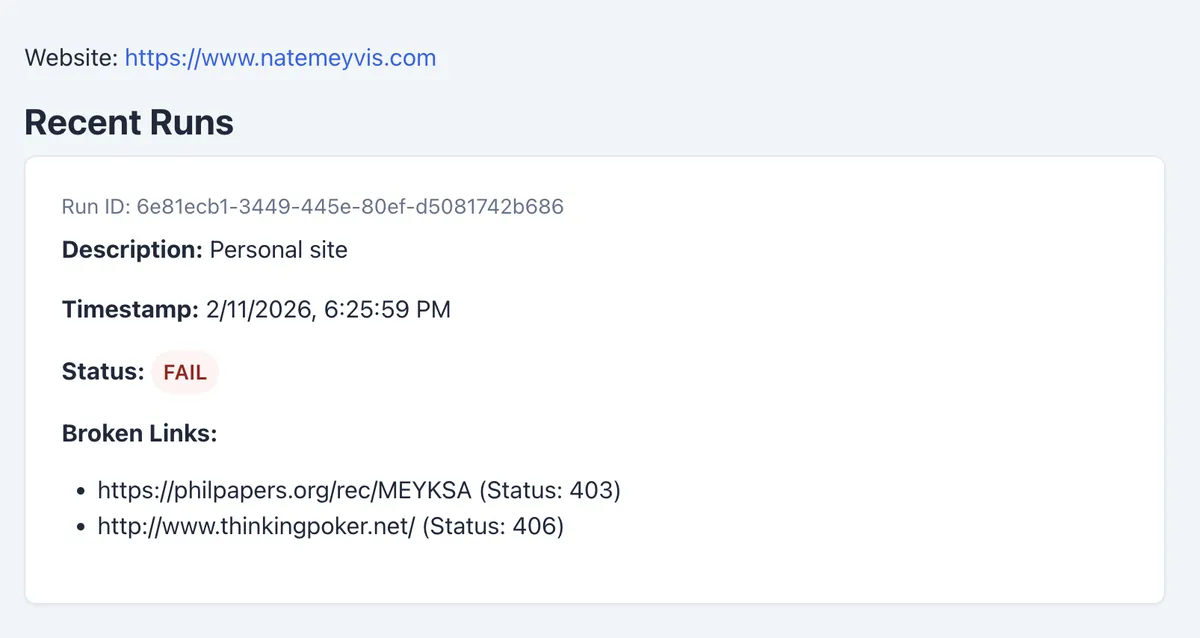

The vibecoded agentically engineered webapp is very simple: the user creates a configuration with a Web site, the system checks the Web site to see if all its links work, and it sends back a report on any broken links. It also emails the user if there's a broken link. The user can review the history of these checks or trigger a new check.
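The core check described above can be sketched in a few lines of standard-library Python. This is only an illustration under stated assumptions, not the code behind the webapp: the names `LinkExtractor`, `extract_links`, `fetch_status`, and `find_broken_links` are hypothetical, the rule "anything that fails or returns 400 or above is broken" is a simplification, and the email and history features are left out.

```python
from html.parser import HTMLParser
from urllib.error import HTTPError
from urllib.parse import urldefrag, urljoin
from urllib.request import Request, urlopen


class LinkExtractor(HTMLParser):
    """Collects the href of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def extract_links(html, base_url):
    """Return absolute http(s) URLs linked from html, de-duplicated, in order."""
    parser = LinkExtractor()
    parser.feed(html)
    seen, links = set(), []
    for href in parser.hrefs:
        # Resolve relative links and drop any #fragment.
        url, _fragment = urldefrag(urljoin(base_url, href))
        if url.startswith(("http://", "https://")) and url not in seen:
            seen.add(url)
            links.append(url)
    return links


def fetch_status(url, timeout=10):
    """Return the final HTTP status for url, or None if the request failed."""
    request = Request(url, headers={"User-Agent": "link-check-sketch"})
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status
    except HTTPError as error:
        return error.code  # 4xx/5xx responses arrive as exceptions
    except OSError:
        return None  # DNS failure, timeout, refused connection, ...


def find_broken_links(page_url, html, fetch=fetch_status):
    """Return (url, status) pairs for links that failed or returned >= 400."""
    broken = []
    for url in extract_links(html, page_url):
        status = fetch(url)
        if status is None or status >= 400:
            broken.append((url, status))
    return broken
```

The `fetch` parameter is there so the checking logic can be exercised with a stand-in (a dictionary lookup, say) instead of real network requests.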

Some more misc. notes:

- I tried part of this one with the `gemini` CLI, which was faster than Claude but made mistakes that Claude doesn't make. Maybe this is a matter of Claude writing in a Claude-friendly way; maybe it was just luck. Speed aside, `gemini` presented information in an extremely useful way (fewer, substantive, intelligible chunks on the screen), and I want to try it some more.

- The bootstrapper is getting better, and I'm getting used to this magic.2 So a greater proportion of my time is spent on what seem like cumbersome steps: asking Claude to debug failed deploys, exercising basic user flows, and so on. Of course, these all make for good candidates for tooling improvements.

- Even if it feels cumbersome, though, a lazy algorithm ("lazy" in the sense of lazy deletion) for things like checklists is a good default for generating instructions for LLMs. Asking AI to anticipate problems and use cases leads to bloated Markdown files, and you really don't want those to be bloated. Moreover, augmenting a checklist with what I learn in a new project isn't very hard. So, when in doubt, I'll be keeping my Markdown files lean at first and adding to them as necessary.

- A link-checker is, in some sense, a bad candidate for vibecoding. It's full of edge cases, subtle logic, and judgment calls. But that's a better argument against trying to sell this than it is against trying to make it for myself: I know the narrow part of the domain I'm working on, and I know how to interpret the results. Meanwhile, the project has not only improved my AI bootstrapping but has also taught me a bit about the Web and HTTP.
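One example of such a judgment call is deciding what counts as "broken" once a status code comes back. The sketch below is an assumption-laden illustration, not the tool's actual rules: the function name `classify_status` and the bucket names are hypothetical, and the grouping of codes is one reasonable choice among several.

```python
def classify_status(status):
    """Sort an HTTP status (or None for a failed request) into a rough bucket."""
    if status is None:
        return "unreachable"  # no response at all: DNS failure, timeout, etc.
    if 200 <= status < 400:
        return "ok"
    if status in (404, 410):
        return "broken"  # Not Found / Gone: almost certainly a real problem
    if status in (401, 403, 429):
        return "uncertain"  # may be auth, bot-blocking, or rate limiting
    if 400 <= status < 500:
        return "broken"  # remaining client errors
    return "uncertain"  # 5xx and anything unexpected: possibly temporary
```

A checker for a personal site might only email on `"broken"` and merely list the `"uncertain"` results in the report, since the author knows how to interpret them.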

As I have spare minutes, I'll improve and extend the tool. For now, though, I'll be happy with my email alerts and with reports like this: